Department of Physics

Advancing physics through excellence in teaching and research so that people can understand the world around us, inside of us, and beyond us

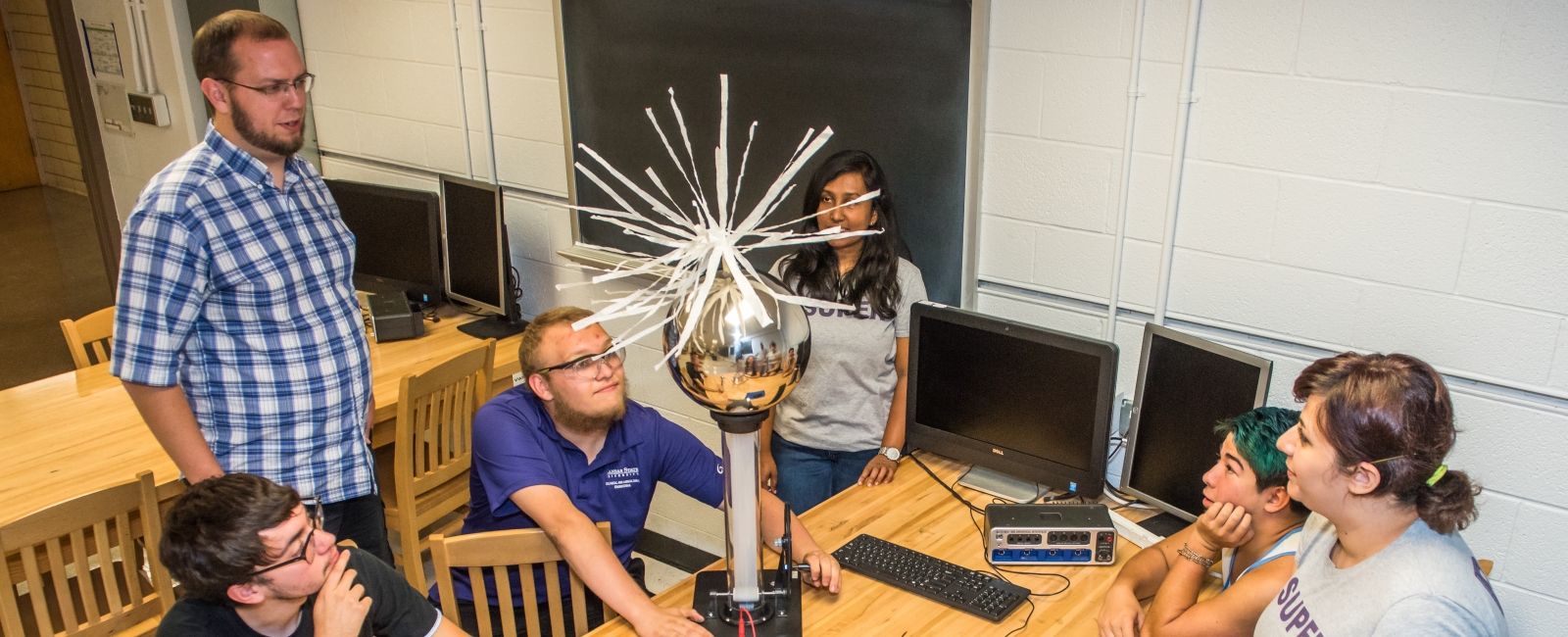

The Department of Physics at Kansas State University is built around highly productive faculty members with teaching and research expertise. Research is conducted in our department and at other world-class facilities such as Fermi National Lab, CERN, DESY (German Electron Synchrotron), SLAC National Accelerator Laboratory, and Lawrence Berkeley National Lab, providing students with a wide variety of experiences.

A full-length (05:10) video is available on our K-State Physics YouTube channel.

Recent News and Announcements

- Department Releases 2025 Newsletter: Discover What's New in K-State Physics
- APS Lilienfeld Prize Winner Bharat Ratra highlighted in American Physical Society video
- Physics & chemical engineering major Noah McPherson selected to McNair Scholars Program
- K-State physicist wins prestigious Lilienfeld Prize from American Physical Society
- K-State physicist Daniel Rolles elected American Physical Society Fellow
- K-State alumna-funded Zeng scholarship supports international graduate student in physics

Meet Our Accomplished Faculty

Our faculty conduct research in atomic, molecular and optical physics; condensed, soft and biological matter physics; cosmology and high-energy physics; and physics education.